Q: Hi Marvin! You’ve been on board with PreviewLabs for a few months now, as an intern. What have they put you up to these past few weeks?

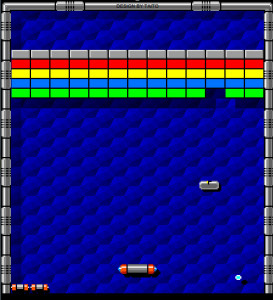

I’ve recently been working on a research project called AR Kanoid. The concept is an augmented reality version of Arkanoid played in three-dimensional space. You move around in a room, holding a tablet in front of you as if it were a paddle, and use it to bounce back virtual balls that are flying towards you. The virtual environment would be visualized on the tablet’s screen.

My primary focus was figuring out the technical requirements for tracking the position of the tablet in the room, which is what would make this possible. In other words, the past weeks have been about trying to create a proof of concept.

Q: Why was this project chosen for development? Was there anything that made it particularly interesting?

We wanted to find a way to accurately detect the position of a smart device in a room. Our reasoning was that, if we could track the position of a tablet accurately, it would be possible to add virtual objects to a real-time camera feed. Successfully completing such a prototype would open up a bunch of new possibilities, and we would create an innovative type of gameplay to boot!

To allow tracking the position of the tablet, we decided to hang up marker images on the walls of the room, to be recognized by the tablet’s camera. The idea is that if the software knows where in the room these markers are positioned, the position of the tablet can be derived from the distance and angle at which the different markers can be seen in the camera image.
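As a rough illustration of that idea (not the project's actual code, and not Vuforia's API), the sketch below assumes the tracker reports each marker's rotation and translation relative to the camera, and that the marker's pose in the room is known in advance. All function and parameter names are hypothetical. The tablet's pose in the room is then the marker's room pose composed with the inverse of the observed camera-to-marker transform.

```python
import math

def mat_mul(a, b):
    """Multiply two 3x3 matrices given as nested lists."""
    return [[sum(a[i][k] * b[k][j] for k in range(3)) for j in range(3)]
            for i in range(3)]

def mat_vec(a, v):
    """Multiply a 3x3 matrix by a 3-vector."""
    return [sum(a[i][k] * v[k] for k in range(3)) for i in range(3)]

def transpose(a):
    """Transpose a 3x3 matrix (the inverse of a pure rotation)."""
    return [list(row) for row in zip(*a)]

def rot_y(angle):
    """Rotation about the vertical (y) axis, angle in radians."""
    c, s = math.cos(angle), math.sin(angle)
    return [[c, 0.0, s], [0.0, 1.0, 0.0], [-s, 0.0, c]]

def camera_pose_in_room(room_R_marker, room_t_marker, cam_R_marker, cam_t_marker):
    """Derive the camera (tablet) pose in room coordinates.

    room_R_marker, room_t_marker: known pose of the marker on the wall.
    cam_R_marker, cam_t_marker:   marker pose as seen from the camera
                                  (assumed to come from the tracker).
    """
    # Invert the observed transform: (R, t)^-1 = (R^T, -R^T t).
    marker_R_cam = transpose(cam_R_marker)
    marker_t_cam = [-x for x in mat_vec(marker_R_cam, cam_t_marker)]
    # Chain room <- marker <- camera.
    room_R_cam = mat_mul(room_R_marker, marker_R_cam)
    rotated = mat_vec(room_R_marker, marker_t_cam)
    room_t_cam = [r + t for r, t in zip(rotated, room_t_marker)]
    return room_R_cam, room_t_cam

if __name__ == "__main__":
    identity = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
    # Marker hangs at (2, 1.5, 0); the camera sees it 3 m straight ahead.
    _, position = camera_pose_in_room(identity, [2.0, 1.5, 0.0],
                                      identity, [0.0, 0.0, 3.0])
    print(position)
```

With several markers in view, each one yields its own estimate of the tablet's pose; how those estimates are combined is a separate design choice that this sketch does not address.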

Well, I had never really worked on a similar project before. That made me wonder whether I had the capacity to make something like this. Those doubts faded when I figured out that Vuforia, an SDK we depended on heavily in this project, wasn’t as complex as I initially thought. Still, it was quite a learning process!

Q: Are there any obstacles you didn’t expect?

Yes. At first, I couldn’t get the SDK to work on my Mac. It then took me a while to realize that I had misinterpreted the way Vuforia picks up markers. I was able to make some headway once I figured that out. In addition, I’ve been dealing with some challenging bugs.

Q: So how did you deal with these hindrances?

Fortunately, with the help of Jannes, we got the SDK working fairly quickly. It took me about a day of trial and error. After that, the most difficult problem was finding out how best to put all of the markers in a network, so that each of them was placed in the scene where it was supposed to be. Since smart devices are the target platform here, the solution had to be fairly efficient; we couldn’t afford to make it too computationally expensive. That led me to redesign the way these networks were built multiple times until I found a way that worked.

Q: Any obstacles that proved to be difficult to solve?

Yes, there was one major issue: the tracking of the markers was so inaccurate that we had to halt the development of the prototype. This issue can be split up into multiple problems. For one, it always took a few frames to get a fix on the position of newly detected markers. During that short period of time, the markers’ positions behaved erratically. That could be fixed by cutting out those first frames. However, we had the same issue with the last few frames before a marker left the field of detection. In that case, the same fix couldn’t be applied: we couldn’t cut out the last few frames because we’re working in real time and can’t know in advance when a marker is about to disappear. Seamlessly cutting out the last few frames necessarily implies working with a delay, which would cripple the gameplay as we intended it. In addition, it proved to be very challenging to track markers from greater distances, as it became difficult to tell different markers apart. The markers need to be detailed in order to work with the Vuforia SDK, but unfortunately, those same details are harder to pick up as the distance to the camera increases. It’s a bit of a catch-22.
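The "cut out the first frames" fix can be sketched as a small gate that only trusts a marker once it has been visible for a number of consecutive frames. This is an illustrative sketch rather than the prototype's code: the class name, the per-frame list of marker IDs, and the default of five warm-up frames are all assumptions.

```python
class WarmupGate:
    """Ignore a marker's pose for its first few consecutive frames."""

    def __init__(self, warmup_frames=5):
        # Number of initial frames to discard (hypothetical default).
        self.warmup_frames = warmup_frames
        self._seen = {}  # marker id -> consecutive frames visible

    def update(self, visible_ids):
        """Return the markers that have been visible long enough to trust."""
        visible = set(visible_ids)
        # A marker that dropped out must warm up again when it reappears.
        self._seen = {m: n for m, n in self._seen.items() if m in visible}
        trusted = []
        for marker in visible_ids:
            self._seen[marker] = self._seen.get(marker, 0) + 1
            if self._seen[marker] > self.warmup_frames:
                trusted.append(marker)
        return trusted

if __name__ == "__main__":
    gate = WarmupGate(warmup_frames=2)
    for frame in (["a"], ["a"], ["a", "b"], ["b"]):
        print(gate.update(frame))
```

The same trick does not work for the trailing frames: the gate only learns that a marker has left after the unreliable frames have already been used, so discarding them would require buffering the output by that many frames.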

Clearly, Marvin has hidden aspirations of becoming an interior designer

Q: What would you say your takeaway from this project is?

First of all, I learned that it’s important to have a working prototype at an early stage, before adding complexity. Validating the core idea is essential: if that doesn’t work, it’s time to reevaluate the way things are going. Second, I initially spent too much time on the UI, which inevitably changed a lot in the course of the project. It’s better to invest in the UI once you’re certain what functionality it should cover. Another thing that stuck with me is that sometimes you just hit a dead end. It’s important to keep some perspective, so you’re able to decide when it’s time to take a different path.

Q: Alright. So what’s next for you?

Currently, I’m getting acquainted with Unreal Engine. It’s nice to work with something that comes with extensive documentation. That’s something I was missing a bit on the last project, which left me fumbling around in the dark at times. Right now, I’m having fun tossing stuff around with the HTC Vive in an Unreal level. I’m figuring out how to implement some functionality, which I will then demonstrate in an example level. Maybe AR Kanoid wasn’t meant to be, but who knows, VR Kanoid may be a whole different story.